Claude Finally Finishes What He Starts

How Opus 4.6 turns Claude into the teammate who actually follows through

If your AI has great ideas and zero follow-through, Opus 4.6 just fixed that.

Here’s what used to happen: you’d start a conversation with Claude, and the first few responses would be sharp and focused. Claude knew exactly what you asked for. Then somewhere along the way, things would start to get fuzzy. Claude would start repeating himself, or worse, quietly abandon the plan you’d been building together for the last hour. And the longer the chat got, the more Claude drifted.

That era is over.

With Opus 4.6, Claude finally remembers your goals, keeps the plan intact, and actually follows through. He’s not just generating text anymore. He’s tracking objectives, managing the work, and delivering results without dropping the thread halfway through.

What Makes Opus 4.6 Different

Most model updates improve how the AI writes. This one improves how it works. The difference matters because it determines whether Claude is a tool you use or a teammate you delegate to.

Keep the Conversation Going

Opus 4.6 handles context limits differently. Instead of hitting a limit and “forgetting,” Claude summarizes and compacts his memory. The critical details stay and the noise gets filtered out. So you can run long-term projects in a single thread without the model losing the point.
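If you want a feel for what “summarize and compact” means, here is a toy sketch in Python. It illustrates the general idea only and is not how Opus 4.6 does it internally: the `Message` class, the `summarize` stand-in (which just keeps the first sentence of each message), and the `max_messages` and `keep_recent` limits are all assumptions made up for this example.

```python
from dataclasses import dataclass


@dataclass
class Message:
    role: str
    text: str


def summarize(messages):
    # Hypothetical stand-in: a real system would ask the model for a summary.
    # Here we simply keep the first sentence of each message.
    return " ".join(m.text.split(".")[0].strip() + "." for m in messages)


def compact(history, max_messages=6, keep_recent=3):
    """Fold older messages into one summary once the history grows too long."""
    if len(history) <= max_messages:
        return history
    older, recent = history[:-keep_recent], history[-keep_recent:]
    summary = Message("system", "Summary of earlier conversation: " + summarize(older))
    # The summary replaces the older messages; recent ones stay word for word.
    return [summary] + recent


history = [Message("user", f"Decision {i}. Extra detail we can drop.") for i in range(8)]
compacted = compact(history)
for message in compacted:
    print(message.role, "|", message.text)
```

The point of the sketch is the shape of the trade: the decisions survive in the summary, the extra detail does not, and the most recent turns are kept untouched.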

Automatic Effort Control

Opus 4.6 decides when to think deeply. It knows the difference between tasks and applies the right amount of effort to each one automatically.

Claude now explicitly maintains goals and constraints across long interactions, instead of treating each response as a fresh start.

True Parallel Workflows

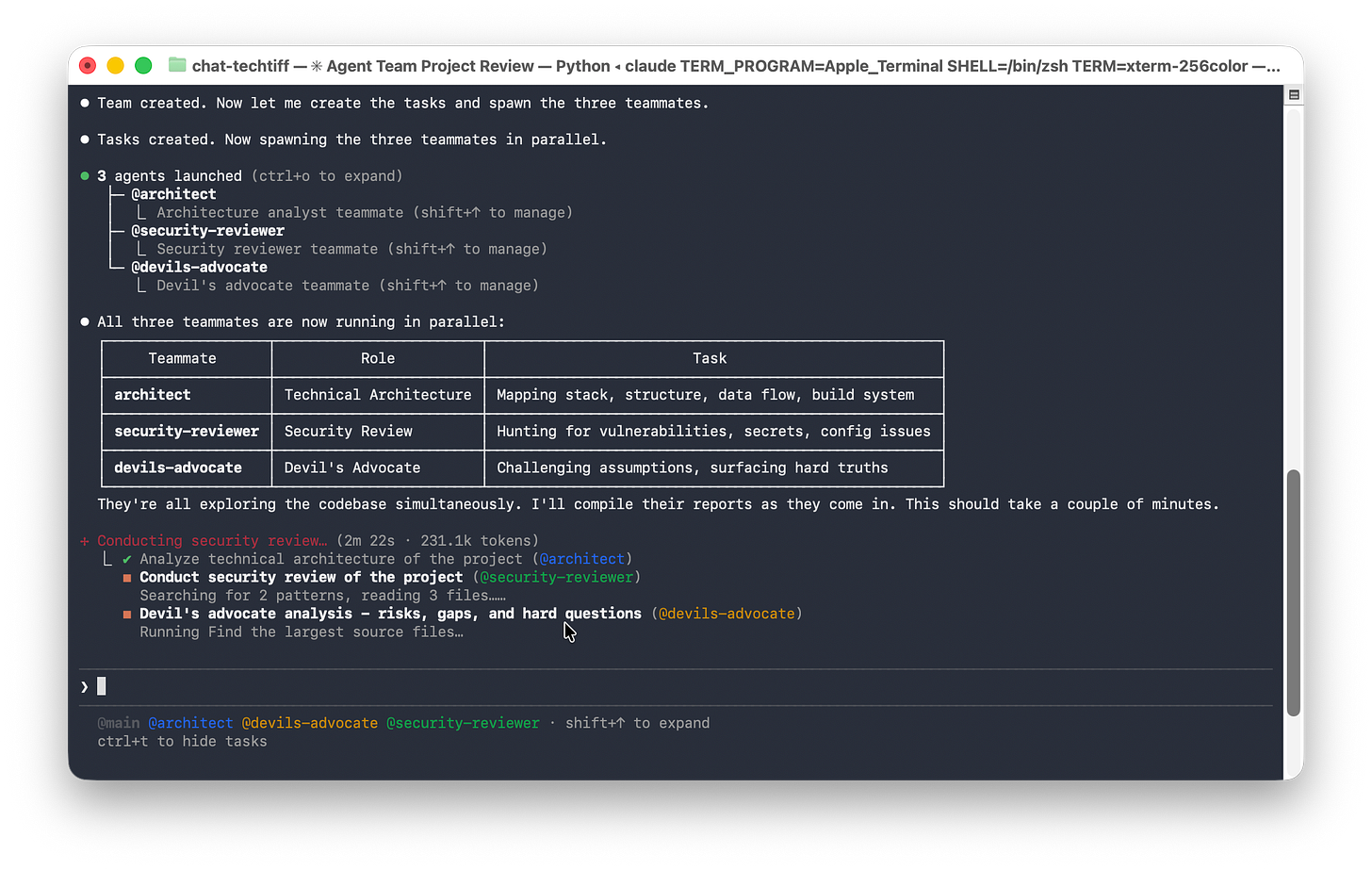

Reviewing a complex project? Claude can split the work across multiple tracks at once: one agent pressure-tests assumptions, another organizes and documents decisions, while a third looks for gaps or risks you might miss.
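For readers who think in code, the three-track review can be sketched with Python’s `asyncio`. This is a rough illustration under stated assumptions, not Claude’s actual mechanism: the track names, the `run_track` placeholder (which returns a labeled stub instead of calling a model), and the `review` function are all hypothetical.

```python
import asyncio

# Hypothetical track names and instructions, based on the review example.
TRACKS = {
    "assumptions": "Pressure-test the assumptions in this project.",
    "decisions": "Organize and document the decisions made so far.",
    "risks": "Look for gaps or risks that might have been missed.",
}


async def run_track(name, instruction, project):
    # Placeholder for a real model call; returns a labeled stub instead.
    await asyncio.sleep(0)
    return f"[{name}] {instruction} (project: {project})"


async def review(project):
    # Launch every track at once and wait for all of them to finish.
    reports = await asyncio.gather(
        *(run_track(name, text, project) for name, text in TRACKS.items())
    )
    return dict(zip(TRACKS, reports))


results = asyncio.run(review("website relaunch"))
for name, report in results.items():
    print(report)
```

Each track gets its own narrow instruction, and the results come back keyed by track so you can read them side by side.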

Claude Code Agent Teams

Agent teams let you coordinate multiple Claude Code instances together. Unlike subagents, you can also interact with individual teammates directly without going through the lead.

I tested this on an old project I hadn’t touched in a while. Claude Code spun up an agent team and came back with a clear technical plan, a security review, and a list of risks I would’ve missed if I’d checked it myself. That’s when it clicked: this isn’t about better answers. It’s about delegation that actually holds.

The Sycophancy Fix

Opus 4.6 has the lowest rates of sycophancy and deception of any model to date. Translation: Claude isn’t there to gas you up or nod along while things slowly go off the rails.

When an AI is too eager to agree, it starts optimizing for your approval instead of the outcome, and that’s how bad assumptions survive longer than they should.

With lower sycophancy and deception, Claude is more willing to hold its ground when something doesn’t work, doesn’t make sense, or isn’t ready yet. That’s what makes it usable for real work.

These capabilities reinforce each other in practice. Context compaction preserves the important information so the system can carry a project forward without losing its footing. Adaptive thinking controls how much effort is applied at each stage, preventing overanalysis and shallow responses.

Agent teams make it possible to run multiple parts of the work simultaneously. The sycophancy fix ensures that none of this output is shaped by a desire to please you instead of telling you the truth.

The Integrity Update

A capable teammate is only as valuable as their honesty. And if you’re going to hand Claude real work, you need to know his output is aligned with your interests and nobody else’s.

This Sunday, Anthropic is running their first-ever Super Bowl ad. But unlike every other company buying airtime, they aren’t trying to sell you a product. They’re buying the most expensive 30 seconds in television to tell you they won’t sell you anything.

Their pledge: Claude will remain ad-free.

That commitment matters because of where AI is headed right now. We’re entering the era of AI agents (AI that doesn’t just answer your questions but acts on your behalf). AI is booking flights, researching vendors, comparing products and making purchasing recommendations.

Think of it like this: if your AI assistant is helping you book flights, you need to know it’s choosing the best flight, not the sponsored airline.

Ad-free is a structural decision about whose interests the AI serves.

When you delegate real work to an AI agent, you need to know the agent is working for you and only you.

Putting Claude to Work on Your Projects

Opus 4.6 gave Claude the ability to carry a project from beginning to end.

Claude delivers his best work when you set him up with clear expectations and give him the responsibility to audit his own output before it reaches your desk.

These approaches aren’t prompting tricks.

They’re how you collaborate with an AI that’s capable of doing more than answering one question at a time.

Define What Done Looks Like

When you hand Claude a project, include what the finished result needs to be. Give him the audience, the tone, the length, what a successful outcome looks like, and what to do if he hits something ambiguous.

Include language like this in your prompt:

Your task is to deliver the complete, ready-to-send result.

It must be accurate, consistent, and aligned with the stated goal.

If any requirement is ambiguous or conflicts with earlier constraints, stop and flag it before continuing. Do not guess.

This tells Claude to plan the work before he starts producing. He maps out the steps, identifies where things might go sideways, and works toward a defined outcome instead of generating content until you tell it to stop.
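If you reuse this language often, you can keep it as a fixed block and attach it to every brief. The sketch below is one way to do that in Python. The `build_brief` helper, its field names, and the sample values are assumptions for illustration; only the wording of the closing clause comes from the prompt above.

```python
# The definition-of-done wording from the prompt, kept as a reusable block.
DONE_CLAUSE = (
    "Your task is to deliver the complete, ready-to-send result.\n"
    "It must be accurate, consistent, and aligned with the stated goal.\n"
    "If any requirement is ambiguous or conflicts with earlier constraints, "
    "stop and flag it before continuing. Do not guess."
)


def build_brief(task, audience, tone, length, success):
    """Assemble a project brief that ends with the definition-of-done clause."""
    fields = [
        ("Task", task),
        ("Audience", audience),
        ("Tone", tone),
        ("Length", length),
        ("Success looks like", success),
    ]
    lines = [f"{label}: {value}" for label, value in fields]
    return "\n".join(lines) + "\n\n" + DONE_CLAUSE


# Sample values are made up for the example.
brief = build_brief(
    task="Write the launch announcement email",
    audience="Existing customers",
    tone="Warm and direct",
    length="Under 200 words",
    success="A reader knows what changed and what to do next",
)
print(brief)
```

Filling in every field forces you to decide what done looks like before Claude starts, which is the whole point of this step.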

Build on One Conversation

Claude holds context across long interactions, which means every follow-up builds on the same foundation without you repeating yourself. Your brand voice, your goals, your constraints, and your earlier decisions all carry forward. That accumulated context is an asset.

Break big projects into sequential steps inside one thread. Each step inherits everything Claude already knows about the project. And if you want to reinforce that continuity, drop this into the conversation:

Everything we’ve discussed so far still applies.

If newer instructions conflict with earlier ones, call out the conflict and ask which should win before proceeding.

Make Claude Review Its Own Work

Before Claude hands you the final result, make him audit the response. Ask Claude to check his output against your original goals, flag anything that feels uncertain, tell you where he might be wrong, and then fix it.

Your prompt:

Before you deliver, audit your work against the original goals.

Identify what’s solid, what’s risky, and where you might be wrong.

Rank issues by severity, fix the high-risk ones, then deliver the final result.

Nothing reaches you until it’s passed Claude’s own quality check. That means fewer errors making it through, fewer hallucinations slipping past, and more work that arrives finished the way you intended. You’re reviewing polished output, not catching mistakes that should have been caught before they hit your desk.

Beyond the Chat Window

Claude now works directly inside Excel and PowerPoint, handling multi-step spreadsheet changes, working with unstructured data, and building presentations that keep your brand consistent across layouts. The same intelligence that carries a project in conversation carries it inside the tools you already use every day.

Claude Finishes What He Starts

Opus 4.6 gave Claude the memory to hold an entire project in context, the judgment to apply the right depth of thinking at every step, the ability to run multiple tasks simultaneously, and the honesty to push back when something in your plan doesn’t work.

On top of that, Anthropic committed to keeping the whole system ad-free, so every recommendation and every output is aligned with your goals alone.

Try Opus 4.6 on your next real project. Give Claude the full brief, define what done looks like, and let your AI carry it through. See what happens when you start delegating like you would to any capable person on your team.

That’s the real upgrade: a teammate you can trust with the work.

The follow-through problem you identify is exactly why multi-agent setups become necessary. One agent loses focus on long workflows. But when you split work across specialized agents with clear handoffs, each maintains context in its domain. I've been running parallel agent teams and the difference in completion rates is dramatic. Single agents drift. Coordinated agents deliver. I wrote about the specific coordination patterns that worked: https://thoughts.jock.pl/p/opus-4-6-agents-test

I have experienced this, and I must say I’m very impressed.